You spent six figures on a camera system. You have 4K resolution, wide-angle lenses covering every blind spot, and a VMS that your integrator swore was “enterprise-grade.” And then an incident happened. The footage was there. The detection event was logged, eleven seconds after the fact. What you needed were edge AI security cameras.

Sound familiar? You’re not alone.

The conversation happening on the show floor at ISC West 2026 isn’t about megapixels anymore. It’s about where the thinking happens. And whether your camera is smart enough to act before it has to ask permission from a server a thousand miles away.

When Milliseconds Matter More Than Megapixels

Here’s the uncomfortable math: a cloud-dependent camera sees something, packages that data, ships it off-site, waits for a compute response, and then acts. In an access control or physical threat scenario, that round trip can cost you precious seconds.

Edge AI changes the equation entirely.

When the inference runs on the chip inside the camera itself, latency collapses. The device isn’t asking the cloud for an answer. It already has one.
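To make that math concrete, here is a minimal sketch of a latency budget for both paths. Every stage name and millisecond value is an assumed placeholder chosen for illustration, not a measurement from any real camera or cloud service; swap in your own numbers from a site survey.

```python
# Illustrative latency budget. All values are assumed placeholders,
# not measurements from any real camera, network, or cloud service.

CLOUD_PATH_MS = {
    "encode_and_package": 40,
    "uplink_to_cloud": 120,
    "cloud_queue_and_inference": 150,
    "downlink_response": 120,
    "trigger_action": 10,
}

EDGE_PATH_MS = {
    "on_chip_inference": 30,
    "trigger_action": 10,
}


def total_latency_ms(stages: dict[str, int]) -> int:
    """Sum the per-stage latencies for one detection-to-action path."""
    return sum(stages.values())


cloud_ms = total_latency_ms(CLOUD_PATH_MS)
edge_ms = total_latency_ms(EDGE_PATH_MS)
print(f"cloud path: {cloud_ms} ms, edge path: {edge_ms} ms")
```

The point is not the specific totals. The edge path simply has fewer stages, and none of them depend on a network you don't control.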

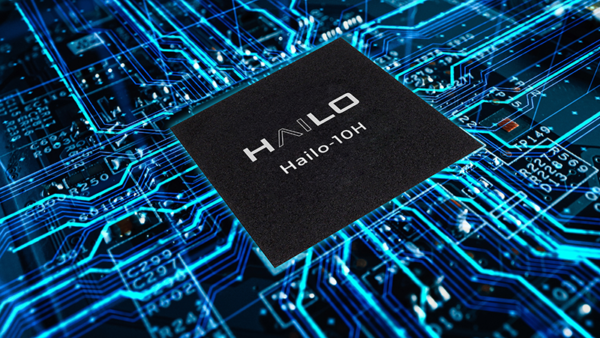

This isn’t theoretical anymore. Chipmakers such as Hailo and Qualcomm are now producing AI accelerator systems on chip (SoCs) specifically engineered to run demanding neural networks at the device level, in real time, at low power. Hailo’s latest AI SoC, for example, is rated at 20 TOPS (trillions of operations per second) and is already inside cameras deployed in production security environments globally.

The speed argument is compelling. But the availability argument might be even more important to your team.

What happens to your security posture when your internet goes down? When the data center has a maintenance window? When a natural disaster saturates bandwidth across your region? A camera that depends on the cloud for its intelligence is, in that moment, essentially dumb glass. An edge-AI camera keeps working — because the brain is local.
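One way to picture "the brain is local" is a camera that decides and alarms on-device, then treats the cloud as an optional upload target. The sketch below is a toy model under that assumption; `EdgeCamera`, its methods, and the frame dictionary format are all hypothetical and do not represent any vendor's firmware.

```python
from collections import deque


class EdgeCamera:
    """Hypothetical model: detection and alarms are local, upload is optional."""

    def __init__(self, max_backlog: int = 1000):
        self.backlog = deque(maxlen=max_backlog)  # event IDs awaiting upload
        self.local_alarms = []                    # alarms raised on-site
        self.uploaded = []                        # event IDs sent to the cloud

    def detect(self, frame: dict) -> bool:
        # Stand-in for on-device inference; a real device would run a model here.
        return frame.get("person_present", False)

    def flush_backlog(self) -> None:
        # Send queued events oldest-first once connectivity is available.
        while self.backlog:
            self.uploaded.append(self.backlog.popleft())

    def handle_frame(self, frame: dict, cloud_online: bool) -> str:
        if not self.detect(frame):
            return "no_event"
        self.local_alarms.append(frame["id"])  # the alarm never waits on the cloud
        self.backlog.append(frame["id"])
        if cloud_online:
            self.flush_backlog()
            return "alarm_and_uploaded"
        return "alarm_queued"


camera = EdgeCamera()
offline_result = camera.handle_frame({"id": 1, "person_present": True}, cloud_online=False)
online_result = camera.handle_frame({"id": 2, "person_present": True}, cloud_online=True)
```

In this model an outage delays the upload, not the alarm. The first event is alarmed locally while offline and is delivered, in order, as soon as the link returns.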

Specify “Edge Inference” in Your Next RFP

When evaluating camera systems, don’t just ask about AI features. Ask where those features run. Request documentation confirming that detection, classification, and alerting happen on-device without requiring a cloud API call. If a vendor can’t answer that cleanly, you have your answer.

Here’s the hard truth that rarely makes it into a vendor slide deck:

Every frame of video your cloud-dependent camera transmits is data leaving your building. In an era of GDPR, CCPA, BIPA, and a growing patchwork of state-level biometric privacy laws, that stream of faces, license plates, and behavioral data is a compliance liability in motion.

Edge AI doesn’t eliminate that conversation. But it dramatically changes it.

When processing happens on-chip, you can apply dynamic privacy masking before anything leaves the device. Faces can be pixelated at the source. Biometric data can be processed locally and discarded without ever hitting a cloud endpoint. You get the intelligence without the exposure.
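As a rough illustration of masking at the source, the sketch below pixelates one rectangle of a grayscale frame by replacing each small block with its average value. It assumes the face bounding box has already been produced by an on-device detector; the `pixelate_region` function and the list-of-rows frame format are hypothetical simplifications, not a camera API.

```python
def pixelate_region(frame, top, left, height, width, block=4):
    """Return a copy of `frame` with one rectangle replaced by block averages.

    `frame` is a list of rows of grayscale ints (0-255). The rectangle is
    assumed to come from an on-device face detector (not shown here).
    """
    out = [row[:] for row in frame]  # copy so the input frame is untouched
    bottom = min(top + height, len(frame))
    right = min(left + width, len(frame[0]))
    for by in range(top, bottom, block):
        for bx in range(left, right, block):
            ys = range(by, min(by + block, bottom))
            xs = range(bx, min(bx + block, right))
            pixels = [frame[y][x] for y in ys for x in xs]
            avg = sum(pixels) // len(pixels)
            for y in ys:
                for x in xs:
                    out[y][x] = avg
    return out


# 8x8 synthetic gradient frame; mask a 4x4 "face" box using 2x2 blocks.
frame = [[y * 8 + x for x in range(8)] for y in range(8)]
masked = pixelate_region(frame, top=2, left=2, height=4, width=4, block=2)
```

Only `masked` would be encoded and transmitted in this scheme. The unmasked pixels inside the box never leave the device.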

Smart city deployments, healthcare campuses, K-12 schools, corporate headquarters: any environment where you’re recording identifiable individuals in regulated spaces should be asking this question on day one, not after a breach.

What Video Language Models Mean for Your Building Next Year

Pay close attention to this one, because it’s moving faster than most enterprise buyers realize.

Classic AI security cameras were trained to detect and classify: person, vehicle, package, weapon. Useful — but brittle. If you didn’t train for it, the camera can’t see it.

Video Language Models (VLMs) are the next frontier. Think of them as giving your camera the reasoning capability of a ChatGPT-style system, but running on video, in real time. Instead of detecting a predefined object class, you can query the system: “Alert me when behavior in this zone looks consistent with a physical altercation.” Or: “Flag any vehicle that lingers in this lane for more than 90 seconds.”
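For contrast, here is roughly what the second query reduces to if you hand-code it as a fixed rule instead of asking in plain language. This is a minimal sketch, not a VLM: it assumes some upstream tracker already supplies a zone-entry time for each vehicle, and the track IDs and `lingering_vehicles` function are hypothetical.

```python
DWELL_LIMIT_S = 90  # threshold taken from the example query


def lingering_vehicles(zone_entries, now, limit_s=DWELL_LIMIT_S):
    """Return IDs of vehicles that have been in the zone longer than limit_s.

    `zone_entries` maps a hypothetical track ID to the time, in seconds,
    at which an upstream tracker saw that vehicle enter the zone.
    """
    return sorted(
        track_id
        for track_id, entered_at in zone_entries.items()
        if now - entered_at > limit_s
    )


zone_entries = {"car-1": 0.0, "car-2": 50.0, "car-3": 10.0}
flagged = lingering_vehicles(zone_entries, now=100.5)
print(flagged)
```

A rule like this has to be written, tuned, and deployed per scenario. The altercation query has no such tidy threshold, which is where a model that reasons over video would earn its keep.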

That’s not a feature set. That’s a fundamentally different category of security intelligence.

At ISC West 2026, we saw early evidence of VLMs being integrated directly into edge SoCs, meaning that reasoning capability is being pushed to the device itself, not reserved for the cloud. The implications for false alarm reduction alone make this worth tracking closely.

Talk to Your Network Team Before Your Integrator

Edge AI reduces cloud bandwidth dependency. But a camera running complex on-device inference still needs clean network architecture for management, firmware updates, and VMS integration. Loop in your network and security teams early. Ask vendors specifically about VLAN segmentation support, encrypted management traffic, and whether the device has been through any third-party cybersecurity certification. In 2026, a camera is a network endpoint first.

The Question You Should Be Asking Every Camera Vendor Right Now

Before your next refresh cycle, before your next integrator meeting, there is one question that cuts through the noise:

“Show me the architecture diagram for where AI inference happens. And what your device does when it loses cloud connectivity.”

If the answer is a long pause followed by a brochure, you’re looking at a cloud-dependent system dressed up in AI marketing language.

The vendors worth your time, whether that’s Hailo-powered camera manufacturers or established players building edge inference into their own silicon, will have that answer ready, because they built the product around that question.

The shift from cloud-dependent to edge-native intelligence isn’t a feature upgrade. It’s a fundamental rethinking of where security systems are trustworthy.

Your building deserves cameras that can think for themselves.

Tim Albright is the founder of AVNation and the driving force behind the AVNation network. He holds the InfoComm CTS and a B.S. from Greenville College, and is pursuing an M.S. in Mass Communications from Southern Illinois University at Edwardsville. When not steering the AVNation ship, Tim has spent his career designing systems for churches both large and small, Fortune 500 companies, and education facilities.