The audiovisual industry loves a good innovation cycle. From analog to digital, hardware to software, we’ve thrived on transformation. But the rise of artificial intelligence is forcing a new kind of reckoning. One not driven by capability, but by conscience. AI and AV security are the next round of challenges we are going to have to face.

In the race to automate, integrate, and “intelligize” our systems, we’re being confronted with a harder question: How secure are we making the spaces we design?

The Temptation of AI Everywhere

At first glance, the latest round of AI-driven updates from Cisco and others feels like progress. Smarter rooms. Self-adjusting mics. Note-taking bots. Agentic systems that don’t just assist but act.

For IT and AV managers, that promise is seductive. Automation that handles repetitive tasks and improves collaboration without extra headcount. But as we discussed on AVWeek episode 737, convenience can sometimes conceal risk.

Jason Haynie, who works in the financial sector, put it bluntly:

“AI is the future. It’s going to run everything here pretty soon. But once that data leaves the premises, we lose control. The integrator loses control. The client loses control.”

That’s the pivot point for AV today. Every AI feature we embrace, from people counting to meeting summaries, has to answer a deeper question about where and how it processes information.

Security Must Be the Foundation

AV designer Kristin Bidwell didn’t mince words about where the industry has fallen short.

“Something the AV industry has not been very good at is security,” she said. “It’s always been an afterthought. Something we talk about only when something goes wrong. But for Fortune 500 companies, it’s at the forefront.”

Her comment speaks to a long-standing cultural gap between AV and IT. While IT frameworks operate on zero-trust models and unified device policies, many AV systems still rely on isolated components and legacy configurations. As AI features creep into endpoints, that gap becomes an open invitation for risk.

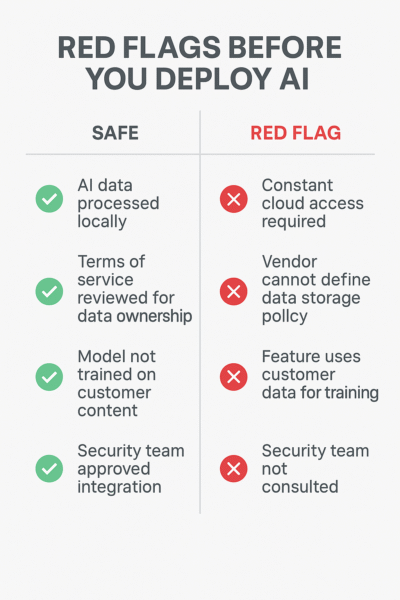

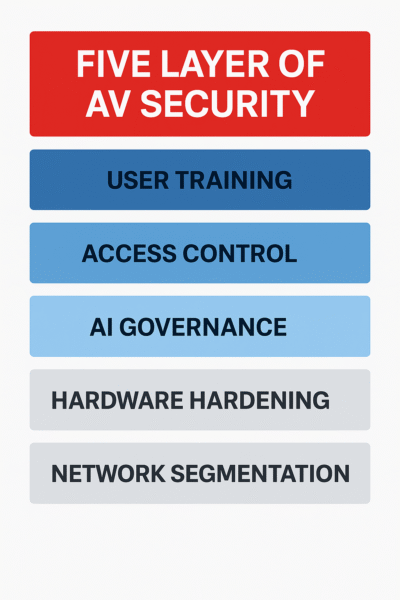

Bidwell argued that before any firm embraces AI tools, it must define what it is using them for and where the data goes next. That means internal policy, user training, and clear boundaries about intellectual property.

“If I use AI to build something, I probably don’t own it anymore,” she warned. “That’s a huge issue for design teams and clients alike.”

Training, Policy, and the Human Factor

For Kelly Teel, the fix starts not in firmware or APIs, but in user behavior.

“We educate our users on what’s safe to discuss or show when AI recording is active,” she explained. “Even if the tool promises data deletion, you can’t assume that means it’s gone. We label rooms where AI is active and tell users to avoid sharing proprietary files.”

Teel’s approach mirrors a growing trend among forward-thinking firms: developing internal “AI hygiene” protocols. These range from local processing requirements to watermarking transcripts generated by AI, all aimed at protecting intellectual property and ensuring compliance.

Her bottom line was simple and telling:

“You can secure the hardware, but it all comes down to user training and understanding. That’s where the real vulnerability lies.”

Local Processing, Trusted Systems

Haynie echoed that sentiment from an end-user standpoint, emphasizing a practical path forward: keep AI as close to the edge as possible.

“I’m quicker to adopt something where the AI agent lives in the device on my network,” he said. “If it’s all happening in the box, it’s a lot easier to get approval. The minute that data leaves the network, red flags go up.”

His company’s zero-trust approach is increasingly common among organizations that manage sensitive data. New AI features, especially those embedded in meeting platforms and conferencing gear, are now evaluated through the same risk framework as any third-party SaaS vendor.

It’s not enough to know what a tool does. AV managers must know where its intelligence lives.
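One way to make that question concrete is to record, for every AI feature under review, where it processes data and whether anything it retains can be verifiably deleted. The sketch below is only an illustration of that idea, not a process described on the episode: the `AIFeature` fields, the `ProcessingLocation` categories, and the flag wording are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class ProcessingLocation(Enum):
    """Hypothetical categories for where an AI feature runs."""
    ON_DEVICE = "on_device"        # inference happens inside the endpoint
    ON_PREMISES = "on_premises"    # inference happens on a server inside the network
    VENDOR_CLOUD = "vendor_cloud"  # data leaves the network for processing


@dataclass(frozen=True)
class AIFeature:
    """Illustrative record of one AI feature; field names are assumptions."""
    name: str
    location: ProcessingLocation
    stores_recordings: bool     # does it retain audio, video, or transcripts?
    deletion_verifiable: bool   # can deletion be audited, not just promised?


def review_flags(feature: AIFeature) -> list[str]:
    """Return the concerns a reviewer would want answered before approval."""
    flags = []
    if feature.location is ProcessingLocation.VENDOR_CLOUD:
        flags.append("data leaves the network: review as a third-party SaaS vendor")
    if feature.stores_recordings and not feature.deletion_verifiable:
        flags.append("retained data cannot be verified as deleted")
    return flags


# Example: two features mentioned in this article, with assumed properties.
people_counter = AIFeature("people counting", ProcessingLocation.ON_DEVICE, False, False)
note_taker = AIFeature("meeting summaries", ProcessingLocation.VENDOR_CLOUD, True, False)

for feature in (people_counter, note_taker):
    print(feature.name, review_flags(feature))
```

A feature that stays in the box comes back with no flags, which matches Haynie's point that on-device processing is easier to approve. A cloud-based note taker that retains transcripts raises both.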

The Industry’s Growing Divide

The episode made one thing clear: the AV industry is splitting into two camps. On one side are manufacturers racing to bake AI into every endpoint, often without fully accounting for the security implications. On the other are the designers, consultants, and IT teams trying to build guardrails fast enough to keep up.

And somewhere in the middle sits the integrator. Asked to deliver both.

As I said during the discussion:

“I only wish the industry would be as excited about security as it has been about AI.”

Because the two are now inseparable. AI may enhance collaboration, automate diagnostics, or even reduce operational costs. But without trust, none of those benefits will matter.

A Reckoning Worth Having

The next phase of AI in AV won’t be defined by who rolls out the flashiest features, but by who builds the most secure ecosystems.

It will require manufacturers to treat data privacy as a core product feature, not a line item. It will push integrators to include cybersecurity in every proposal. And it will demand that designers and end users, like Bidwell, Teel, and Haynie, keep asking the uncomfortable questions, not after the system is installed, but before it’s even specified.

AI isn’t just changing how our systems think. It’s changing how we must think. About trust, ownership, and responsibility.

And that may be the most important innovation of all.

Listen to the full episode of AVWeek 737.

Tim Albright is the founder of AVNation and the driving force behind the AVNation network. He holds the InfoComm CTS and a B.S. from Greenville College, and is pursuing an M.S. in Mass Communications from Southern Illinois University at Edwardsville. When not steering the AVNation ship, Tim has spent his career designing systems for churches both large and small, Fortune 500 companies, and education facilities.